Resources.StressTests History

April 24, 2013, at 02:16 PM

by -

Changed lines 1-172 from:

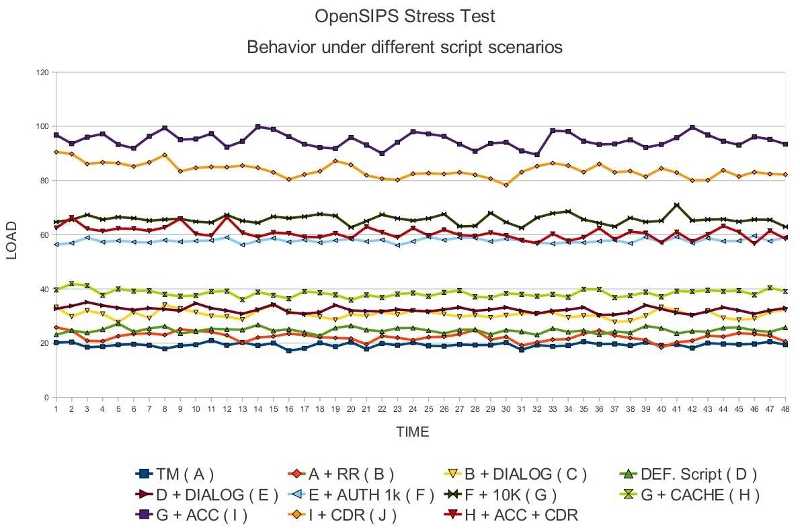

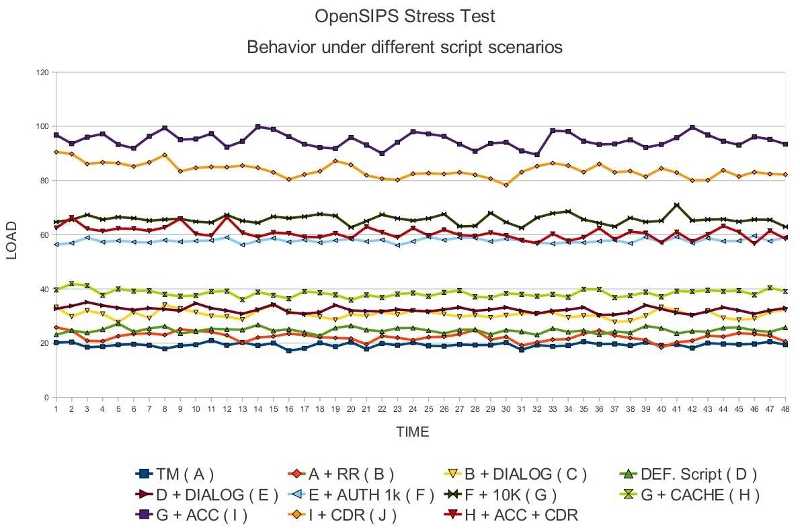

Several stress tests were performed using OpenSIPS 1.6.4 to simulate real-life scenarios, to get an idea of the load that OpenSIPS can handle, and to measure the performance penalty of using OpenSIPS features such as dialog support, DB authentication, DB accounting and memory caching.

What hardware was used for the stress tests

The OpenSIPS proxy was installed on an Intel i7 920 @ 2.67GHz CPU with 6 GB of available RAM. The UAS and UACs resided on the same LAN as the proxy, to avoid network limitations.

What script scenarios were used

The base test was that of a simple stateful SIP proxy. We then kept adding features on top of this very basic configuration: loose routing, dialog support, authentication and accounting. In every test the proxy ran with 32 children, and the database back-end was MySQL.

Performance tests

A total of 11 tests were performed, using 11 different scenarios. The goal was to achieve the highest possible CPS (calls per second) in the given scenario, store load samples from the OpenSIPS proxy and then analyze the results.

Simple stateful proxy

In this first test, OpenSIPS behaved as a simple stateful proxy, just statefully passing messages from the UAC to the UAS (a simple t_relay()). The purpose of this test was to measure the performance penalty introduced by making the proxy stateful. The actual script used for this scenario can be found here. In this scenario we stopped the test at 13000 CPS with an average load of 19.3% (actual load returned by htop). See the chart, where this particular test is marked as test A.

Stateful proxy with loose routing

In the second test, the OpenSIPS script also implemented the "Record-Route" mechanism, recording the path in initial requests and then making sequential requests follow the determined path. The purpose of this test was to measure the performance penalty introduced by record routing and loose routing. The actual script used for this scenario can be found here. In this scenario we stopped the test at 12000 CPS with an average load of 20.6% (actual load returned by htop). See the chart, where this particular test is marked as test B.

Stateful proxy with loose routing and dialog support

In the 3rd test we additionally made OpenSIPS dialog aware. The purpose of this particular test was to determine the performance penalty introduced by the dialog module. The actual script used for this scenario can be found here. In this scenario we stopped the test at 9000 CPS with an average load of 20.5% (actual load returned by htop). See the chart, where this particular test is marked as test C.

Default Script

The 4th test had OpenSIPS running with the default script (provided by OpenSIPS distros). In this scenario, OpenSIPS can act as a SIP registrar, can properly handle CANCELs and can detect traffic loops. OpenSIPS routed requests based on USRLOC, but only one subscriber was used. The purpose of this test was to measure the performance penalty of a more advanced routing logic, taking into account that the script used by this scenario is an enhanced version of the script used in the 3.2 test. The actual script used for this scenario can be found here. In this scenario we stopped the test at 9000 CPS with an average load of 17.1% (actual load returned by htop). See the chart, where this particular test is marked as test D.

Default Script with dialog support

This scenario added dialog support on top of the previous one. The purpose of this scenario was to determine the performance penalty introduced by the dialog module. The actual script used for this scenario can be found here. In this scenario we stopped the test at 9000 CPS with an average load of 22.3% (actual load returned by htop). See the chart, where this particular test is marked as test E.

Default Script with dialog support and authentication

Call authentication was added on top of the previous scenario. 1000 subscribers were used, and a local MySQL server was used as the DB back-end. The purpose of this test was to measure the performance penalty introduced by having the proxy authenticate users. The actual script used for this scenario can be found here. In this scenario we stopped the test at 6000 CPS with an average load of 26.7% (actual load returned by htop). See the chart, where this particular test is marked as test F.

10k subscribers

This test used the same script as the previous one, the only difference being that there were 10 000 users in the subscribers table. The purpose of this test was to see how the USRLOC module scales with the number of registered users. In this scenario we stopped the test at 6000 CPS with an average load of 30.3% (actual load returned by htop). See the chart, where this particular test is marked as test G.

Subscriber caching

In this test, OpenSIPS used the localcache module in order to do fewer database queries. The cache expiry time was set to 20 minutes, so all 10k registered subscribers were in the cache for the duration of the test. The purpose of this test was to see how much DB queries affect OpenSIPS performance, and how much caching can help. The actual script used for this scenario can be found here. In this scenario we stopped the test at 6000 CPS with an average load of 18% (actual load returned by htop). See the chart, where this particular test is marked as test H.

Accounting

This test had OpenSIPS running with 10k subscribers, with authentication (no caching), dialog aware and doing the old type of accounting (two entries, one for INVITE and one for BYE). The purpose of this test was to measure the performance penalty introduced by having OpenSIPS do the old type of accounting. The actual script used for this scenario can be found here. In this scenario we stopped the test at 6000 CPS with an average load of 43.8% (actual load returned by htop). See the chart, where this particular test is marked as test I.

CDR accounting

In this test, OpenSIPS was directly generating CDRs for each call, as opposed to the previous scenario. The purpose of this test was to see how the new type of accounting compares to the old one. The actual script used for this scenario can be found here. In this scenario we managed to achieve 6000 CPS with an average load of 38.7% (actual load returned by htop). See the chart, where this particular test is marked as test J.

CDR accounting + Auth Caching

In the last test, OpenSIPS was generating CDRs just as in the previous test, but it was also caching the 10k subscribers it had in the MySQL database. In this scenario we stopped the test at 6000 CPS with an average load of 28.1% (actual load returned by htop). See the chart.

Load statistics graph

Each test had a different CPS value, ranging from 13000 CPS in the first test to 6000 CPS in the last tests. To give an accurate overall comparison of the tests, we scaled all the results up to the 13 000 CPS of the first test, adjusting the load at the same time. So, while the X axis shows the time, the Y axis represents a function of the actual CPU load and the CPS.

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg |

Test naming convention:

Each particular test is described in the following way: [ PrevTestID + ] Description ( TestId ).

Example: a test adding dialog support on top of a previous test labeled as X would be labeled: X + Dialog ( Y )

See full size chart
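The page does not state the exact function used to scale each test's load up to the 13 000 CPS of the first test. As a rough illustration only, the sketch below assumes a simple linear extrapolation (load multiplied by 13000 / CPS); the function name `scaled_load`, the `RESULTS` list and the label used for the unlabeled last test are hypothetical, while the CPS and load figures are the ones reported for each test.

```python
# Reference call rate: the CPS reached in the first test (test A).
REFERENCE_CPS = 13000

# (test label, CPS at which the test was stopped, average load % from htop)
# The last test has no letter on the page, so a descriptive label is used.
RESULTS = [
    ("A", 13000, 19.3),
    ("B", 12000, 20.6),
    ("C", 9000, 20.5),
    ("D", 9000, 17.1),
    ("E", 9000, 22.3),
    ("F", 6000, 26.7),
    ("G", 6000, 30.3),
    ("H", 6000, 18.0),
    ("I", 6000, 43.8),
    ("J", 6000, 38.7),
    ("CDR + auth caching", 6000, 28.1),
]


def scaled_load(cps, load_percent, reference_cps=REFERENCE_CPS):
    """Linearly extrapolate a measured load to the reference call rate.

    ASSUMPTION: linear scaling; the original chart may use another function.
    """
    return load_percent * reference_cps / cps


for label, cps, load in RESULTS:
    print(f"{label}: {load}% at {cps} CPS, scaled value {scaled_load(cps, load):.1f}")
```

Under this assumption, a test measured at a lower CPS has its load raised proportionally, which is what makes tests run at different call rates comparable on a single axis.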

Performance penalty table

Conclusions

to:

(:redirect About.PerformanceTests-StressTests quiet=1:)

March 08, 2011, at 02:18 PM

by -

Changed lines 155-156 from:

to:

Changed lines 158-164 from:

to:

March 08, 2011, at 02:15 PM

by -

Changed lines 154-164 from:

to:

March 08, 2011, at 02:13 PM

by -

Changed lines 153-164 from:

to:

March 07, 2011, at 09:27 PM

by -

Added lines 166-167:

Changed line 170 from:

to:

March 07, 2011, at 09:26 PM

by -

Added lines 149-150:

March 07, 2011, at 09:25 PM

by -

Changed lines 151-162 from:

to:

March 07, 2011, at 09:13 PM

by -

Changed lines 152-159 from:

to:

March 07, 2011, at 09:03 PM

by -

Changed lines 158-159 from:

to:

March 07, 2011, at 09:02 PM

by -

Changed lines 152-158 from:

to:

March 07, 2011, at 09:00 PM

by -

Changed lines 152-156 from:

to:

March 07, 2011, at 08:56 PM

by -

Changed lines 152-154 from:

to:

March 07, 2011, at 07:29 PM

by -

Changed lines 152-153 from:

to:

March 07, 2011, at 07:15 PM

by -

Changed lines 152-153 from:

to:

March 07, 2011, at 07:13 PM

by -

Changed line 151 from:

to:

March 07, 2011, at 07:12 PM

by -

Changed lines 150-153 from:

TODO

to:

March 07, 2011, at 07:11 PM

by -

Changed line 146 from:

to:

March 07, 2011, at 07:10 PM

by -

Changed line 132 from:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg |

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg |

March 07, 2011, at 07:10 PM

by -

Added lines 147-150:

Performance penalty table

TODO

March 07, 2011, at 07:03 PM

by -

Changed line 45 from:

The actual script used for this scenario can be found here .

to:

The actual script used for this scenario can be found here .

March 07, 2011, at 06:28 PM

by -

Changed lines 33-34 from:

In the second test, OpenSIPS script implements also the "Record-Route" mechanism, recording the path in initial requests, and then making sequential requests follow the determined path. The purpose of this test was to see what is the performance penalty introduce by the mechanism of record and loose routing.

to:

In the second test, OpenSIPS script implements also the "Record-Route" mechanism, recording the path in initial requests, and then making sequential requests follow the determined path. The purpose of this test was to see what is the performance penalty introduced by the mechanism of record and loose routing.

Changed lines 43-44 from:

In the 3rd test we additionally made OpenSIPS dialog aware. The purpose of this particular test was to determin the performance penalty introduced by the dialog module.

to:

In the 3rd test we additionally made OpenSIPS dialog aware. The purpose of this particular test was to determine the performance penalty introduced by the dialog module.

Changed lines 63-64 from:

This scenario added dialog support on top of the previous one. The purpose of this scenario was to determin the performance penalty induced by the dialog module.

to:

This scenario added dialog support on top of the previous one. The purpose of this scenario was to determine the performance penalty introduced by the the dialog module.

Changed line 101 from:

This test had OpenSIPS running with 10k subscribers, with authentication ( no caching ), dialog aware and doing old type of accounting ( two entries, one for INVITE and one for BYE ). The purpose of this test was to see the performance penalty introduced by having OpenSIPS do accounting.

to:

This test had OpenSIPS running with 10k subscribers, with authentication ( no caching ), dialog aware and doing old type of accounting ( two entries, one for INVITE and one for BYE ). The purpose of this test was to see the performance penalty introduced by having OpenSIPS do the old type of accounting.

March 07, 2011, at 06:25 PM

by -

Changed line 146 from:

to:

March 07, 2011, at 06:25 PM

by -

Changed line 146 from:

to:

March 07, 2011, at 06:25 PM

by -

Changed lines 146-147 from:

to:

March 07, 2011, at 06:24 PM

by -

Added lines 145-147:

March 07, 2011, at 06:23 PM

by -

Changed line 132 from:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg |

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg |

March 07, 2011, at 06:23 PM

by -

Deleted lines 144-145:

March 07, 2011, at 06:22 PM

by -

Changed line 140 from:

Example: A test adding dialog on top of a previous test labeled as X would be labeled :

to:

Example: A test adding dialog support on top of a previous test labeled as X would be labeled :

March 07, 2011, at 06:21 PM

by -

Changed line 146 from:

to:

March 07, 2011, at 06:20 PM

by -

Changed line 140 from:

Example : A test that adds dialog support on top of a previous test labeled as X would appear in the chart as :

to:

Example: A test adding dialog on top of a previous test labeled as X would be labeled :

March 07, 2011, at 06:18 PM

by -

Changed line 132 from:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg | Load Chart

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg |

March 07, 2011, at 06:18 PM

by -

Changed line 132 from:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg | Load Chart

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg | Load Chart

March 07, 2011, at 06:18 PM

by -

Changed line 132 from:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg | Load Chart

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg | Load Chart

March 07, 2011, at 06:14 PM

by -

Changed lines 136-138 from:

Each particular test is described in the following way : [ PrevTestID + ] Description ( TestId ).

to:

Each particular test is described in the following way : [ PrevTestID + ] Description ( TestId ).

March 07, 2011, at 06:13 PM

by -

Added line 139:

March 07, 2011, at 06:12 PM

by -

Changed lines 135-136 from:

to:

Each particular test is described in the following way : [ PrevTestID + ] Description ( TestId ).

March 07, 2011, at 06:12 PM

by -

Changed lines 135-136 from:

to:

Example : A test that adds dialog support on top of a previous test labeled as X would appear in the chart as :

March 07, 2011, at 05:56 PM

by -

Changed line 133 from:

to:

Deleted lines 137-138:

March 07, 2011, at 05:54 PM

by -

Changed lines 132-134 from:

Each particular test is described in the following way : [ PrevTestID + ] Description ( TestId )

Example : A test that adds dialog support on top of a previous test labeled as X would appear in the chart as :

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg | Load Chart

Test naming convention:

Deleted lines 138-139:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg |

March 07, 2011, at 05:52 PM

by -

Changed line 138 from:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg |

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg |

March 07, 2011, at 05:52 PM

by -

Added line 137:

Added line 139:

March 07, 2011, at 05:51 PM

by -

Changed line 24 from:

In this first test, OpenSIPS behaved as a simple stateful scenario, just statefully passing messages from the UAC to the UAS ( a simple t_relay()).

to:

In this first test, OpenSIPS behaved as a simple stateful scenario, just statefully passing messages from the UAC to the UAS ( a simple t_relay()). The purpose of this test was to see what is the performance penalty introduced by making the proxy stateful.

Changed lines 63-64 from:

This scenario added dialog support on top of the previous one. The actual script used for this scenario can be found here .

to:

This scenario added dialog support on top of the previous one. The purpose of this scenario was to determin the performance penalty induced by the dialog module. The actual script used for this scenario can be found here .

Changed lines 73-74 from:

Call authentication was added on top of the previous scenario. 1000 subscribers were used, and a local MYSQL was used as the DB back-end. The actual script used for this scenario can be found here.

to:

Call authentication was added on top of the previous scenario. 1000 subscribers were used, and a local MYSQL was used as the DB back-end. The purpose of this test was to see the performance penalty introduced by having the proxy authenticate users. The actual script used for this scenario can be found here.

Changed lines 83-84 from:

This test used the same script as the previous one, the only difference being that there were 10 000 users in the subscribers table.

to:

This test used the same script as the previous one, the only difference being that there were 10 000 users in the subscribers table. The purpose of this test was to see how the USRLOC module scales with the number of registered users.

Changed lines 91-92 from:

In the test, OpenSIPS used the localcache module in order to do less database queries. The cache expiry time was set to 20 minutes, so during the test, all 10k registered subscribers have been in the cache. The actual script used for this scenario can be found here.

to:

In the test, OpenSIPS used the localcache module in order to do less database queries. The cache expiry time was set to 20 minutes, so during the test, all 10k registered subscribers have been in the cache. The purpose of this test was to see how much DB queries are affecting OpenSIPS performance, and how much can caching help. The actual script used for this scenario can be found here.

Changed lines 101-102 from:

This test had OpenSIPS running with 10k subscribers, with authentication ( no caching ), dialog aware and doing old type of accounting ( two entries, one for INVITE and one for BYE ). The actual script used for this scenario can be found here.

to:

This test had OpenSIPS running with 10k subscribers, with authentication ( no caching ), dialog aware and doing old type of accounting ( two entries, one for INVITE and one for BYE ). The purpose of this test was to see the performance penalty introduced by having OpenSIPS do accounting. The actual script used for this scenario can be found here.

Changed lines 111-112 from:

In this test, OpenSIPS was directly generating CDRs for each call, as opposed to the previous scenario. The actual script used for this scenario can be found here.

to:

In this test, OpenSIPS was directly generating CDRs for each call, as opposed to the previous scenario. The purpose of this test was to see how the new type of accounting compares to the old one. The actual script used for this scenario can be found here.

Changed lines 137-139 from:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg |

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg | See full size chart

March 07, 2011, at 05:46 PM

by -

Deleted lines 53-54:

March 07, 2011, at 05:46 PM

by -

Changed lines 53-55 from:

The 4th test had OpenSIPS running with the default script (provided by OpenSIPS distros). In this scenario, OpenSIPS can act as a SIP registrar, can properly handle CANCELs and detect traffic loops. OpenSIPS routed requests based on USRLOC, but only one subscriber was used. The purpose of this test was to see what is the performance penalty of a more advanced routing logic, taking into account the fact that the script used by this scenario is an enhanced version of the script used in 3.2.

to:

The 4th test had OpenSIPS running with the default script (provided by OpenSIPS distros). In this scenario, OpenSIPS can act as a SIP registrar, can properly handle CANCELs and detect traffic loops. OpenSIPS routed requests based on USRLOC, but only one subscriber was used. The purpose of this test was to see what is the performance penalty of a more advanced routing logic, taking into account the fact that the script used by this scenario is an enhanced version of the script used in the 3.2 test .

March 07, 2011, at 05:44 PM

by -

Changed lines 33-34 from:

In the second test, OpenSIPS script implements also the "Record-Route" mechanism, recording the path in initial requests, and then making sequential requests follow the determined path.

to:

In the second test, OpenSIPS script implements also the "Record-Route" mechanism, recording the path in initial requests, and then making sequential requests follow the determined path. The purpose of this test was to see what is the performance penalty introduce by the mechanism of record and loose routing.

Changed lines 43-44 from:

In the 3rd test we additionally made OpenSIPS dialog aware. The actual script used for this scenario can be found here .

to:

In the 3rd test we additionally made OpenSIPS dialog aware. The purpose of this particular test was to determin the performance penalty introduced by the dialog module. The actual script used for this scenario can be found here .

Changed line 53 from:

The 4th test had OpenSIPS running with the default script (provided by OpenSIPS distros). In this scenario, OpenSIPS can act as a SIP registrar, can properly handle CANCELs and detect traffic loops. OpenSIPS routed requests based on USRLOC, but only one subscriber was used.

to:

The 4th test had OpenSIPS running with the default script (provided by OpenSIPS distros). In this scenario, OpenSIPS can act as a SIP registrar, can properly handle CANCELs and detect traffic loops. OpenSIPS routed requests based on USRLOC, but only one subscriber was used. The purpose of this test was to see what is the performance penalty of a more advanced routing logic, taking into account the fact that the script used by this scenario is an enhanced version of the script used in 3.2.

March 07, 2011, at 05:33 PM

by -

Changed lines 6-7 from:

Several stress tests were performed using OpenSIPS 1.6.4 to simulate some real life scenarios, to get an idea on how much real life traffic can OpenSIPS handle and to see what is the performance penalty you get when using some OpenSIPS features like dialog support, authentication, accounting, etc.

to:

Several stress tests were performed using OpenSIPS 1.6.4 to simulate some real life scenarios, to get an idea on the load that can be handled by OpenSIPS and to see what is the performance penalty you get when using some OpenSIPS features like dialog support, DB authentication, DB accounting, memory caching, etc.

Changed line 24 from:

In this first test, OpenSIPS behaved as a simple stateful scenario, just passing messages from the UAC to the UAS.

to:

In this first test, OpenSIPS behaved as a simple stateful scenario, just statefully passing messages from the UAC to the UAS ( a simple t_relay()).

Changed lines 27-28 from:

In this scenario we managed to achieve 13000 CPS with an average load of 19.3 % ( actual load returned by htop )

to:

In this scenario we stopped the test at 13000 CPS with an average load of 19.3 % ( actual load returned by htop )

Changed line 33 from:

In the second test, OpenSIPS behaved like a loose router, recording the path in initial requests, and then making sequential requests follow the determined path.

to:

In the second test, OpenSIPS script implements also the "Record-Route" mechanism, recording the path in initial requests, and then making sequential requests follow the determined path.

Changed lines 36-37 from:

In this scenario we managed to achieve 12000 CPS with an average load of 20.6 % ( actual load returned by htop )

to:

In this scenario we stopped the test at 12000 CPS with an average load of 20.6 % ( actual load returned by htop )

Changed lines 42-45 from:

The 3rd test has OpenSIPS dialog aware. The actual script used for this scenario can be found here . In this scenario we managed to achieve 9000 CPS with an average load of 20.5 % ( actual load returned by htop )

to:

In the 3rd test we additionally made OpenSIPS dialog aware. The actual script used for this scenario can be found here . In this scenario we stopped the test at 9000 CPS with an average load of 20.5 % ( actual load returned by htop )

Changed lines 50-51 from:

The 4th test had OpenSIPS running with the default script. In this scenario, OpenSIPS can act as a SIP registrar, can properly handle CANCELs and detect traffic loops. OpenSIPS routed requests based on USRLOC, but only one subscriber was used.

to:

The 4th test had OpenSIPS running with the default script (provided by OpenSIPS distros). In this scenario, OpenSIPS can act as a SIP registrar, can properly handle CANCELs and detect traffic loops. OpenSIPS routed requests based on USRLOC, but only one subscriber was used.

Changed lines 54-55 from:

In this scenario we managed to achieve 9000 CPS with an average load of 17.1 % ( actual load returned by htop )

to:

In this scenario we stopped the test at 9000 CPS with an average load of 17.1 % ( actual load returned by htop )

Changed lines 62-63 from:

In this scenario we managed to achieve 9000 CPS with an average load of 22.3 % ( actual load returned by htop )

to:

In this scenario we stopped the test at 9000 CPS with an average load of 22.3 % ( actual load returned by htop )

Changed lines 68-71 from:

Call authentication was added on top of the previous scenario. 1000 subscribers were used, and MYSQL was used as the DB back-end. The actual script used for this scenario can be found here. In this scenario we managed to achieve 6000 CPS with an average load of 26.7 % ( actual load returned by htop )

to:

Call authentication was added on top of the previous scenario. 1000 subscribers were used, and a local MYSQL was used as the DB back-end. The actual script used for this scenario can be found here. In this scenario we stopped the test at 6000 CPS with an average load of 26.7 % ( actual load returned by htop )

Changed lines 78-79 from:

In this scenario we managed to achieve 6000 CPS with an average load of 30.3 % ( actual load returned by htop )

to:

In this scenario we stopped the test at 6000 CPS with an average load of 30.3 % ( actual load returned by htop )

Changed lines 86-87 from:

In this scenario we managed to achieve 6000 CPS with an average load of 18 % ( actual load returned by htop )

to:

In this scenario we stopped the test at 6000 CPS with an average load of 18 % ( actual load returned by htop )

Changed lines 94-95 from:

In this scenario we managed to achieve 6000 CPS with an average load of 43.8 % ( actual load returned by htop )

to:

In this scenario we stopped the test at 6000 CPS with an average load of 43.8 % ( actual load returned by htop )

Changed line 110 from:

In this scenario we managed to achieve 6000 CPS with an average load of 28.1 % ( actual load returned by htop )

to:

In this scenario we stopped the test at 6000 CPS with an average load of 28.1 % ( actual load returned by htop )

March 04, 2011, at 07:43 PM

by -

Changed line 132 from:

to:

March 04, 2011, at 07:18 PM

by -

Changed line 130 from:

to:

March 04, 2011, at 07:17 PM

by -

Added lines 125-126:

March 04, 2011, at 07:17 PM

by -

Changed lines 128-129 from:

to:

March 04, 2011, at 07:10 PM

by -

Changed lines 127-130 from:

TODO

to:

March 04, 2011, at 07:10 PM

by -

Changed line 119 from:

Each particular test is described in the following way : Description [ + PrevTestID ] ( TestId )

to:

Each particular test is described in the following way : [ PrevTestID + ] Description ( TestId )

March 04, 2011, at 07:09 PM

by -

Changed lines 121-126 from:

Example : A test that adds dialog support on top of a previous test labeled as X would appear in the chart as >>blue<< X + Dialog ( Y )

to:

Example : A test that adds dialog support on top of a previous test labeled as X would appear in the chart as : X + Dialog ( Y )

Deleted lines 124-125:

March 04, 2011, at 07:09 PM

by -

Changed lines 121-122 from:

Example : A test that adds dialog support on top of a previous test labeled as X would appear in the chart as X + Dialog ( Y )>><<

to:

Example : A test that adds dialog support on top of a previous test labeled as X would appear in the chart as >>blue<< X + Dialog ( Y )

March 04, 2011, at 07:08 PM

by -

Changed line 122 from:

X + Dialog ( Y )

to:

X + Dialog ( Y )>><<

March 04, 2011, at 07:07 PM

by -

Added lines 118-124:

Each particular test is described in the following way : Description [ + PrevTestID ] ( TestId )

Example : A test that adds dialog support on top of a previous test labeled as X would appear in the chart as

X + Dialog ( Y )

March 04, 2011, at 06:55 PM

by -

Changed line 119 from:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg | Load Graph

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg |

March 04, 2011, at 06:55 PM

by -

Changed lines 119-120 from:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg | Detailed Load Chart

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg | Load Graph

March 04, 2011, at 06:54 PM

by -

Changed line 119 from:

to:

March 04, 2011, at 06:53 PM

by -

Changed lines 119-121 from:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg"

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg | Detailed Load Chart

March 04, 2011, at 06:52 PM

by -

Changed line 119 from:

<img src="http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg" />

to:

http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg"

March 04, 2011, at 06:51 PM

by -

Changed line 119 from:

to:

<img src="http://www.opensips.org/uploads/Resources/PerformanceTests/LoadGraph.jpg" />

March 04, 2011, at 06:50 PM

by -

Changed line 119 from:

to:

March 04, 2011, at 06:49 PM

by -

Changed lines 34-35 from:

The actual script used for this scenario can be found at TODO .

to:

The actual script used for this scenario can be found here .

Changed lines 42-43 from:

The 3rd test has OpenSIPS dialog aware. The actual script used for this scenario can be found at TODO .

to:

The 3rd test has OpenSIPS dialog aware. The actual script used for this scenario can be found here .

Changed lines 52-53 from:

The actual script used for this scenario can be found at TODO .

to:

The actual script used for this scenario can be found here .

Changed lines 60-61 from:

This scenario added dialog support on top of the previous one. The actual script used for this scenario can be found at TODO .

to:

This scenario added dialog support on top of the previous one. The actual script used for this scenario can be found here .

Changed lines 68-69 from:

Call authentication was added on top of the previous scenario. 1000 subscribers were used, and MYSQL was used as the DB back-end. The actual script used for this scenario can be found at TODO.

to:

Call authentication was added on top of the previous scenario. 1000 subscribers were used, and MYSQL was used as the DB back-end. The actual script used for this scenario can be found here.

Changed lines 84-85 from:

This test used the same script as the previous one, the only difference being that OpenSIPS used the localcache module in order to do less database queries. The cache expiry time was set to 20 minutes, so during the test, all 10k registered subscribers have been in the cache.

to:

In the test, OpenSIPS used the localcache module in order to do less database queries. The cache expiry time was set to 20 minutes, so during the test, all 10k registered subscribers have been in the cache. The actual script used for this scenario can be found here.

Changed lines 90-93 from:

Regular accounting

This test had OpenSIPS running with 10k subscribers, with authentication ( no caching ), dialog aware and doing old type of accounting ( two entries, one for INVITE and one for BYE ). The actual script used for this scenario can be found at TODO.

to:

Accounting

This test had OpenSIPS running with 10k subscribers, with authentication ( no caching ), dialog aware and doing old type of accounting ( two entries, one for INVITE and one for BYE ). The actual script used for this scenario can be found here.

Changed lines 100-101 from:

In this test, OpenSIPS was directly generating CDRs for each call, as opposed to the previous scenario. The actual script used for this scenario can be found at TODO.

to:

In this test, OpenSIPS was directly generating CDRs for each call, as opposed to the previous scenario. The actual script used for this scenario can be found here.

Changed lines 106-108 from:

CDR accounting

In the last test, OpenSIPS was generating CDRs just as in the previous test, but it was also caching the 10k subscribers it had in the MYSQL database. The actual script used for this scenario can be found at TODO.

to:

CDR accounting + Auth Caching

In the last test, OpenSIPS was generating CDRs just as in the previous test, but it was also caching the 10k subscribers it had in the MYSQL database.

March 04, 2011, at 06:44 PM

by -

Changed line 25 from:

The actual script used for this scenario can be found at TODO .

to:

The actual script used for this scenario can be found at here .

March 04, 2011, at 06:18 PM

by -

Changed line 6 from:

Several stress tests were performed using OpenSIPS 1.6.4 to emulate some real life scenarios, to get an idea on how much real life traffic can OpenSIPS handle and to see what is the performance penalty you get when using some OpenSIPS features like dialog support, authentication, accounting, etc.

to:

Several stress tests were performed using OpenSIPS 1.6.4 to simulate some real life scenarios, to get an idea on how much real life traffic can OpenSIPS handle and to see what is the performance penalty you get when using some OpenSIPS features like dialog support, authentication, accounting, etc.

March 04, 2011, at 06:12 PM

by -

Changed line 119 from:

TODO - Insert PIC

to:

March 04, 2011, at 06:04 PM

by -

Added lines 121-123:

Conclusions

TODO

March 04, 2011, at 06:03 PM

by -

Changed lines 115-120 from:

TODO

to:

Each test had different CPS values, ranging from 13000 CPS, in the first test, to 6000 in the last tests. To give you an accurate overall comparison of the tests, we have scaled all the results up to the 13 000 CPS of the first test, adjusting the load in the same time. So, while on the X axis we have the time, the Y axis represents a function based on actual CPU load and CPS.

TODO - Insert PIC

March 04, 2011, at 05:45 PM
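The scaling described in the revision text above can be illustrated with a short sketch. The page only says the Y axis is "a function based on actual CPU load and CPS" and does not give the formula, so the linear scaling below is an assumption, not the authors' method. The CPS and load figures are the ones reported in this page's revisions; the function name `scaled_load` and the label "K" for the unlabeled last test are hypothetical.

```python
# Hypothetical normalization: scale each test's average CPU load to the
# 13000 CPS reference of test A, ASSUMING load grows linearly with CPS.
# The page does not state the actual function used for its chart.
REFERENCE_CPS = 13000

# (CPS reached, average load in % as returned by htop), as reported on the page.
RESULTS = {
    "A": (13000, 19.3),
    "B": (12000, 20.6),
    "C": (9000, 20.5),
    "D": (9000, 17.1),
    "E": (9000, 22.3),
    "F": (6000, 26.7),
    "G": (6000, 30.3),
    "H": (6000, 18.0),
    "I": (6000, 43.8),
    "J": (6000, 38.7),
    "K": (6000, 28.1),  # last test; the page gives it no letter, "K" is assumed
}


def scaled_load(cps, load_percent, reference_cps=REFERENCE_CPS):
    """Return the load extrapolated linearly from `cps` to `reference_cps`."""
    if cps <= 0:
        raise ValueError("cps must be positive")
    return load_percent * reference_cps / cps


if __name__ == "__main__":
    for name, (cps, load) in RESULTS.items():
        print(f"test {name}: {cps} CPS at {load} % -> {scaled_load(cps, load):.1f} scaled")
```

Under this assumption a test that reached the reference CPS keeps its measured load, while tests that stopped at 6000 CPS have their load multiplied by 13000/6000. Real CPU load rarely scales perfectly linearly with call rate, so the numbers are only useful for rough comparison between scenarios.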

by -

Changed lines 29-30 from:

See chart, where this particular test is marked as test A.

to:

See chart, where this particular test is marked as test A.

Changed lines 38-39 from:

See chart, where this particular test is marked as test B.

to:

See chart, where this particular test is marked as test B.

Changed lines 46-47 from:

See chart, where this particular test is marked as test C.

to:

See chart, where this particular test is marked as test C.

Changed lines 56-57 from:

See chart, where this particular test is marked as test D.

to:

See chart, where this particular test is marked as test D.

Changed lines 64-65 from:

See chart, where this particular test is marked as test E.

to:

See chart, where this particular test is marked as test E.

Changed lines 72-73 from:

See chart, where this particular test is marked as test F.

to:

See chart, where this particular test is marked as test F.

Changed lines 80-81 from:

See chart, where this particular test is marked as test G.

to:

See chart, where this particular test is marked as test G.

Changed lines 88-89 from:

See chart, where this particular test is marked as test H.

to:

See chart, where this particular test is marked as test H.

Changed lines 96-97 from:

See chart, where this particular test is marked as test I.

to:

See chart, where this particular test is marked as test I.

Changed lines 104-105 from:

See chart, where this particular test is marked as test J.

to:

See chart, where this particular test is marked as test J.

Changed line 112 from:

See chart

to:

See chart

March 04, 2011, at 05:42 PM

by -

Changed lines 19-20 from:

A total of 11 tests were performed, using 11 different scripting scenarios. The goal was to achieve the highest possible CPS in the given scenario, store load samples from the OpenSIPS proxy and then analyze the results.

to:

A total of 11 tests were performed, using 11 different scenarios. The goal was to achieve the highest possible CPS in the given scenario, store load samples from the OpenSIPS proxy and then analyze the results.

Changed lines 98-112 from:

to:

CDR accounting

In this test, OpenSIPS was directly generating CDRs for each call, as opposed to the previous scenario. The actual script used for this scenario can be found at TODO.

In this scenario we managed to achieve 6000 CPS with an average load of 38.7 % ( actual load returned by htop )

See chart, where this particular test is marked as test J.

CDR accounting

In the last test, OpenSIPS was generating CDRs just as in the previous test, but it was also caching the 10k subscribers it had in the MYSQL database. The actual script used for this scenario can be found at TODO.

In this scenario we managed to achieve 6000 CPS with an average load of 28.1 % ( actual load returned by htop )

See chart

March 04, 2011, at 05:36 PM

by -

Deleted line 24:

Deleted line 33:

March 04, 2011, at 05:36 PM

by -

Changed lines 28-29 from:

In this scenario we managed to achieve 13000 CPS with an average load of 19.3% ( actual load returned by htop )

to:

In this scenario we managed to achieve 13000 CPS with an average load of 19.3 % ( actual load returned by htop )

Changed lines 38-39 from:

In this scenario we managed to achieve 12000 CPS with an average load of 20.6 ( actual load returned by htop )

to:

In this scenario we managed to achieve 12000 CPS with an average load of 20.6 % ( actual load returned by htop )

Changed lines 46-47 from:

In this scenario we managed to achieve 9000 CPS with an average load of 20.5 ( actual load returned by htop )

to:

In this scenario we managed to achieve 9000 CPS with an average load of 20.5 % ( actual load returned by htop )

Changed lines 52-53 from:

The 4th test had OpenSIPS running with the default script. In this scenario, OpenSIPS can act as a SIP registrar, can properly handle CANCELs and detect traffic loops.

to:

The 4th test had OpenSIPS running with the default script. In this scenario, OpenSIPS can act as a SIP registrar, can properly handle CANCELs and detect traffic loops. OpenSIPS routed requests based on USRLOC, but only one subscriber was used.

Changed lines 56-57 from:

In this scenario we managed to achieve 9000 CPS with an average load of 29.4 ( actual load returned by htop )

to:

In this scenario we managed to achieve 9000 CPS with an average load of 17.1 % ( actual load returned by htop )

Added lines 59-100:

Default Script with dialog support

This scenario added dialog support on top of the previous one. The actual script used for this scenario can be found at TODO .

In this scenario we managed to achieve 9000 CPS with an average load of 22.3 % ( actual load returned by htop )

See chart, where this particular test is marked as test E.

Default Script with dialog support and authentication

Call authentication was added on top of the previous scenario. 1000 subscribers were used, and MYSQL was used as the DB back-end. The actual script used for this scenario can be found at TODO.

In this scenario we managed to achieve 6000 CPS with an average load of 26.7 % ( actual load returned by htop )

See chart, where this particular test is marked as test F.

10k subscribers

This test used the same script as the previous one, the only difference being that there were 10 000 users in the subscribers table.

In this scenario we managed to achieve 6000 CPS with an average load of 30.3 % ( actual load returned by htop )

See chart, where this particular test is marked as test G.

Subscriber caching

This test used the same script as the previous one, the only difference being that OpenSIPS used the localcache module in order to do less database queries. The cache expiry time was set to 20 minutes, so during the test, all 10k registered subscribers have been in the cache.

In this scenario we managed to achieve 6000 CPS with an average load of 18 % ( actual load returned by htop )

See chart, where this particular test is marked as test H.

Regular accounting

This test had OpenSIPS running with 10k subscribers, with authentication ( no caching ), dialog aware and doing old type of accounting ( two entries, one for INVITE and one for BYE ). The actual script used for this scenario can be found at TODO.

In this scenario we managed to achieve 6000 CPS with an average load of 43.8 % ( actual load returned by htop )

See chart, where this particular test is marked as test I.

March 04, 2011, at 05:19 PM

by -

Changed lines 48-49 from:

See chart, where this particular test is marked as test B.

to:

See chart, where this particular test is marked as test C.

Changed line 58 from:

to:

See chart, where this particular test is marked as test D.

March 04, 2011, at 05:18 PM

by -

Changed lines 22-27 from:

Simple stateless proxy

In this first test, OpenSIPS behaved as a simple statefull scenario, just passing messages from the UAC to the UAS. The actual script used for this scenario can be found TODO .

In this scenario we managed to achieve 13000 CPS with an average load of 19.3% ( actual load as returned by htop )

to:

Simple stateful proxy

In this first test, OpenSIPS behaved as a simple stateful scenario, just passing messages from the UAC to the UAS. The actual script used for this scenario can be found at TODO .

In this scenario we managed to achieve 13000 CPS with an average load of 19.3% ( actual load returned by htop )

Added lines 31-57:

Stateful proxy with loose routing

In the second test, OpenSIPS behaved like a loose router, recording the path in initial requests, and then making sequential requests follow the determined path. The actual script used for this scenario can be found at TODO .

In this scenario we managed to achieve 12000 CPS with an average load of 20.6 ( actual load returned by htop )

See chart, where this particular test is marked as test B.

Stateful proxy with loose routing and dialog support

The 3rd test has OpenSIPS dialog aware. The actual script used for this scenario can be found at TODO .

In this scenario we managed to achieve 9000 CPS with an average load of 20.5 ( actual load returned by htop )

See chart, where this particular test is marked as test B.

Default Script

The 4th test had OpenSIPS running with the default script. In this scenario, OpenSIPS can act as a SIP registrar, can properly handle CANCELs and detect traffic loops. The actual script used for this scenario can be found at TODO .

In this scenario we managed to achieve 9000 CPS with an average load of 29.4 ( actual load returned by htop )

March 04, 2011, at 05:07 PM

by -

Changed line 28 from:

to:

See chart, where this particular test is marked as test A.

March 04, 2011, at 05:06 PM

by -

Changed line 32 from:

TODo

to:

TODO

March 04, 2011, at 05:05 PM

by -

Changed lines 15-16 from:

The database back-end used was MYSQL

to:

Each time the proxy ran with 32 children and the database back-end used was MYSQL.

Changed lines 25-27 from:

The actual script used for this scenario can be found here .

to:

The actual script used for this scenario can be found TODO .

In this scenario we managed to achieve 13000 CPS with an average load of 19.3% ( actual load as returned by htop )

March 04, 2011, at 05:00 PM

by -

Added line 21:

Added lines 23-26:

In this first test, OpenSIPS behaved as a simple statefull scenario, just passing messages from the UAC to the UAS. The actual script used for this scenario can be found here .

March 04, 2011, at 04:57 PM

by -

Added lines 16-25:

Performance tests

A total of 11 tests were performed, using 11 different scripting scenarios. The goal was to achieve the highest possible CPS in the given scenario, store load samples from the OpenSIPS proxy and then analyze the results.

Simple stateless proxy

Load statistics graph

TODo

March 04, 2011, at 03:30 PM

by -

Changed lines 9-15 from:

What scenarios were used

to:

What hardware was used for the stress tests

The OpenSIPS proxy was installed on an Intel i7 920 @ 2.67GHz CPU with 6 Gb of available RAM. The UAS and UACs resided on the same LAN as the proxy, to avoid network limitations.

What script scenarios were used

The base test used was that of a simple stateful SIP proxy. Than we kept adding features on top of this very basic configuration, features like loose routing, dialog support, authentication and accounting. The database back-end used was MYSQL

March 04, 2011, at 03:22 PM

by -

Added lines 5-6:

Several stress tests were performed using OpenSIPS 1.6.4 to emulate some real life scenarios, to get an idea on how much real life traffic can OpenSIPS handle and to see what is the performance penalty you get when using some OpenSIPS features like dialog support, authentication, accounting, etc.

March 04, 2011, at 03:14 PM

by -

Changed lines 4-7 from:

to:

What scenarios were used

March 04, 2011, at 03:12 PM

by -

Changed lines 1-4 from:

TODO

to:

Resources -> Performance Tests -> Stress Tests

This page has been visited 5225 times.

(:toc-float Table of Content:)

March 04, 2011, at 03:09 PM

by -

Added line 1:

TODO